Archive

Whisper interpreting with radio transmitter/receiver (“bidule” by williamssound.com)

We have:

Usage:

- Speaker speaks

- Interpreter

  - listens to speaker in room

  - wearing one of the transmitters, whisper-interprets into target language channel 1, 2 or 3

- Delegates

  - wearing receiver and headphones, listen to one of the interpreter channels

Troubleshooting:

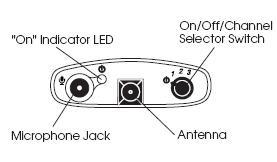

- If you cannot send/receive audio at all, check

-

Channel selector on top of unit: make sure you are on the right channel

-

Power indicator light is on? If not , adjust batteries in compartment at rear of unit

- If you cannot send/receive audio well, check

-

Transmitter:

- microphone adjustment wheel under rear panel door

- position yourself and the microphone so that there is no ambient noise going into your (sensitive) microphone, especially not from the speaker

-

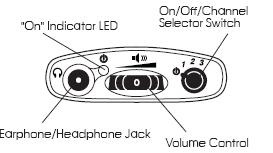

Receiver: Volume wheel on top of unit

Language Lab Techniques for (Self-)Evaluation and Grading of Student Recordings with Audacity

This quick and dirty video (not narrated and uncut: time is money, and storage cheap…) demonstrates a technique in the free audio editor Audacity with which instructors and students can more easily (self-)evaluate parallel recordings from the language lab, be it from model imitation, question-response, or consecutive interpreting exercises. The recordings here are the output of a Sanako Study1200, which automatically gets stored in a folder on a network share:

| When? | What? |
| --- | --- |
| 0,00 | how to load 10 student files of 5 MB each = 2:30 min (but as a batch, allowing you to do something else in the foreground instead of waiting) |
| 2,50 | how to select a part of the timeline to play |
| 3,00 | how to move tracks up to more easily work with them and the menu |
| 3,30 | how to play all tracks simultaneously (choir, normally not very useful for evaluation) |
| 3,40 | how to play only one track (solo): evaluate & compare |

Network shares: What you need to know

| Network path | Mapped for staff as | Mapped for students as | Staff can read | Staff can write | Students can read | Students can write | Language services use |
| --- | --- | --- | --- | --- | --- | --- | --- |
| \\lgu.ac.uk\lgu$\londonmet departments | J: | | yes | yes | no | no | \Humanities arts and languages (HAL)\Language_services: documentation for internal use |
| \\stushare_server\StuShare | K: | S: | | | yes | | \Humanities, Arts and Languages\Language_Services: public documentation (including services manual) |
| \\lgu.ac.uk\lgu$\misres$ | M: | | | | | | |
| \\lgu.ac.uk\lgu$\apps07 | N: | N: | | | | | |
| \\lgu.ac.uk\lgu$\multimedia student\mmedia\mmedia1 | O: if requested | O: if requested | some (if not, request access) | some | yes | no | \Language_services: (large) multimedia files (including teaching materials video clips) |
| \\lgu.ac.uk\lgu$\multimedia student\mmedia\mmedia2 | | | some | some | no | no | |
| \\datacty01\staff$ | S: | | yes | no: todo | | | TBA |
| \\venus\homes | X: | | | | | | |
| \\misres01\eureka | Y: | | yes | no | | | |

Learning materials management: Offline resources (2005-2006)

AKA books, shiny disks, VHS and – oh my! – cassette tapes. All come with shelves. Yuck! Where is Google Books, when you need it?

The media library I had to work with had, as I found it, a content specific labeling system and a language specific sort order on the shelves. This seems an anti-pattern in many modern languages departments: try to avoid complexity by isolating yourself. 1st degree: each language program on its own; 2nd degree: each instructor on his/her own. Atomization leads to idiosyncrasies and duplication of efforts (which must result in lowering of standards, despite, no doubt, individual toiling).

Trying to find an easy answer for complexity: I am afraid I quickly had to throw overboard the suggestion to implement the Library of Congress labeling scheme. I also abandoned trying to represent in one physical order what has to be viewed under multiple perspectives. Instead, I introduced a unique id labeling scheme based on a simple numerical counter: each new item would be added to the end of the stacks with a label equaling max(counter) + 1, and as a new row at the bottom of an Excel spreadsheet, which supported all discovery and lending with sort, filter, search.
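The counter-based labeling can be sketched in a few lines of Python. This is only an illustration under assumptions, not the original spreadsheet: the field names (`title`, `language`, `medium`), the helper names, and the sample records are all made up for this example.

```python
from typing import Dict, List

Record = Dict[str, object]


def next_label(catalog: List[Record]) -> int:
    """Return max(existing ids) + 1, or 1 for an empty catalog."""
    return max((int(r["id"]) for r in catalog), default=0) + 1


def add_item(catalog: List[Record], **fields: object) -> Record:
    """Append a new record at the bottom, labeled with the next counter value."""
    record: Record = {"id": next_label(catalog), **fields}
    catalog.append(record)
    return record


def find(catalog: List[Record], **criteria: object) -> List[Record]:
    """Exact-match filter, standing in for the spreadsheet's filter feature."""
    return [r for r in catalog if all(r.get(k) == v for k, v in criteria.items())]


# Hypothetical sample data: shelf position follows the id, not the content.
catalog: List[Record] = []
add_item(catalog, title="Sample grammar book", language="German", medium="book")
add_item(catalog, title="Sample film", language="French", medium="VHS")
german_items = find(catalog, language="German")
```

Because the label carries no meaning, the physical order never has to be reshuffled; every other perspective (language, medium, level) becomes a filter on the records.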

And here is a partial screenshot of the offline_resources.xls:

Way too much complexity still remained: there were too many fields, and all types of resources had to be coerced into records of the same format (I hand-coded an Access database for the records to avoid this requirement: don’t go there!). I should have relied more on full text search, even with the simple regular expressions that come with Excel.

However, the sheet was open all day on the lab assistant’s computer behind the reference desk and worked pretty well, or was at least a major improvement. Remaining issues: speed of spreadsheet (too many complex ISBN validating formulas), lab staff training, more so instructor training (if they did not want to rely on lab staff entirely or on trying to browse the physical stacks looking for a physical order where there was no such system any more: change management problems).

Learning materials management: Online_resources.xls II: E-repository (2006-7)

I participated in the implementation of a “learning object” repository (is there such a thing as a learning “object” in a progression-oriented field like SLA?). Anyhow, the software of choice was Equella which, as I read on the listservs, is favored by Blackboard admins for its Blackboard module, which is supposed to provide instructors with the primary interface to Equella in their Blackboard course websites.

Since this did not get implemented during my time, we used what seems to be primarily the admin interface and, since Equella does not come with one, attempted to implement a metadata schema based on the prior work of an LLAS-sponsored group. We also soon found that, despite its complexity, the metadata schema was still lacking (e.g. you won’t get through French 101 without several sections on “Negation”, nor through German, Spanish, etc.).

Excel to the rescue once more: Here is a spreadsheet in action that not only allows adding, tagging, searching and filtering links to, once more – easier than to make your own – web-based exercises, but now also allows the collaborative building of a metadata schema. But alas, the number of fields is growing again.

Learning materials management: Textbook exercises (2000-2008)

Textbook exercise management is a rapidly evolving field, with more textbooks becoming digital and online resources, more metadata getting added, and AI getting implemented to enable personalized (data-driven, feedback-based) learning paths.

German.xls was an attempt to be able to sort, search, filter the exercises of some bigger textbooks in the American college market, each containing thousands of exercises (how many? why does it take a sumif() to find out?):

Subtitles.xls converted text files with movie subtitles, which can be extracted from DVDs or found online, into a spreadsheet for post-processing (search, filter, sort, and assign different show times, for DVD editions differ).
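A conversion of this kind might look roughly like the Python sketch below. It is not the original Subtitles.xls logic: it assumes the subtitle text files are in the common SubRip (.srt) layout, writes CSV instead of a native Excel file, and uses made-up function and column names. The `offset` parameter illustrates shifting show times for a different DVD edition.

```python
import csv
import io
import re
from datetime import timedelta
from typing import List, Tuple

# SubRip timestamps look like 00:01:02,345 (a dot is tolerated as separator).
TIME = re.compile(r"(\d+):(\d{2}):(\d{2})[,.](\d{3})")

Cue = Tuple[timedelta, timedelta, str]


def parse_time(stamp: str) -> timedelta:
    match = TIME.search(stamp)
    if match is None:
        raise ValueError(f"not a subtitle timestamp: {stamp!r}")
    hours, minutes, seconds, millis = map(int, match.groups())
    return timedelta(hours=hours, minutes=minutes, seconds=seconds, milliseconds=millis)


def parse_srt(text: str) -> List[Cue]:
    """Split the file into blank-line-separated cues: (start, end, text)."""
    cues: List[Cue] = []
    for block in re.split(r"\n\s*\n", text.strip()):
        lines = block.splitlines()
        timing = next((i for i, line in enumerate(lines) if "-->" in line), None)
        if timing is None:
            continue  # skip blocks without a timing line
        start, end = (parse_time(part) for part in lines[timing].split("-->"))
        cues.append((start, end, " ".join(l.strip() for l in lines[timing + 1:])))
    return cues


def to_csv(cues: List[Cue], offset: timedelta = timedelta(0)) -> str:
    """Write one row per cue; times in seconds, shifted by `offset`."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["start_s", "end_s", "text"])
    for start, end, text in cues:
        writer.writerow([
            f"{(start + offset).total_seconds():.3f}",
            f"{(end + offset).total_seconds():.3f}",
            text,
        ])
    return out.getvalue()
```

The resulting CSV opens directly in Excel, where the usual search, filter and sort apply.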

Auralog Tell me more 7 is a language program that allegedly comes with “more than five times the amount of content than other language programs” – but strangely not with a table of contents of its exercises. Automation extracted the exercises first into the file system for full text search with Windows Desktop Search, then converted the extracted files into links in the Auralog Content XLS.

Learning materials management: Links (1998-2004)

Originally implemented for a series of Canadian universities teaching Wirtschaftsdeutsch, then continually expanded into all of German for Queen’s University, and into multiple languages, including non-Western ones, for the University of Michigan-Dearborn and Drake University.

It was based on an open source software project by Gossamer Threads, popular for web 2.0 precursors of collaborative link collections, whose Perl-CGI code needed only minor modification to facilitate “commenting” by students on instructor-“posted” (i.e. assigned) links.

The model was Yahoo’s human-edited web catalogue. The data structure was the tree (nested folders, unidirectional graph). For management, I implemented a secondary branch under the root “old links” that mirrored the primary one. Using Perl regex, links were automatically moved into it once a batch link-checking management script in the open source software had identified and logged them as “broken” (404 and a few other similarly bad HTTP return codes).
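The archiving step for broken links can be illustrated with a short sketch. The original used Perl regex on the link checker's log; this version is in Python, and the log format, the set of status codes beyond 404, and the dictionary-based catalogue are all assumptions made for illustration.

```python
import re
from typing import Dict, List

# Hypothetical log format, one entry per line: "<status> <category path> <url>"
LOG_LINE = re.compile(r"^(?P<status>\d{3})\s+(?P<category>/\S*)\s+(?P<url>\S+)$")
BROKEN = {404, 410, 500}  # assumption: which return codes count as "broken"
OLD_ROOT = "/old links"   # root of the mirrored branch


def archive_broken(catalog: Dict[str, List[str]], log: str) -> int:
    """Move every logged broken link to the same path under OLD_ROOT."""
    moved = 0
    for line in log.splitlines():
        match = LOG_LINE.match(line.strip())
        if match is None or int(match["status"]) not in BROKEN:
            continue
        category, url = match["category"], match["url"]
        if url in catalog.get(category, []):
            catalog[category].remove(url)
            # Mirror the category path so the link keeps its place in the tree.
            catalog.setdefault(OLD_ROOT + category, []).append(url)
            moved += 1
    return moved
```

Mirroring the category path keeps each archived link findable where it used to live, so it can be restored by hand if the site comes back.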

The original layout of the “ontology” first introduced me to the complexity of such a task. The basic content division was between 2 branches.

- web-based ready-made teaching materials for commenting (recommending, categorizing) by instructors and self-access by students (no feedback of student data to the instructor mostly, except by email, and outside of the application, in those days).

- the other content branch consisted of “authentic materials” not related to teaching: the early-day web applications, sometimes multimedia (maps, audio and video collections, news), oftentimes also self-service database interfaces (online shopping and public services) whose rather restricted interface language and topical focus (think Wirtschaftsdeutsch) lent themselves to capstone exercises at the end of textbook chapters (our “Friday in the lab”, not even a language lab then). Geek bonus points: one of these Fridays, a future Queen’s University-educated engineer asked me whether I had written all these pages they browsed through in the searchable catalogue of eventually 1500 links. Well, dynamic web pages were not common at all in education in those days, and the credit goes to Gossamer Threads.

While there was hope to collect a comprehensive teaching resource through collaboration, “der Weg war das Ziel”: having students interact with and review foreign language web content. The links database remained definitely, as it grew in bursts revolving around the topics of our chapters. I had a lot of fun finding instructional ways of having students review all those fancy web applications into which endless amounts of money were poured before the first bubble in this millennium burst, e.g. the first early online city maps for “Wegbeschreibung” in German 102, as well as web 2.0-like developments such as grassroots web cams (Germans allowing the world to spy on their surroundings 24/7, including remote camera panning). You could go all kinds of places (“Wie heißt der Bürgermeister von Wesel? Was macht das Wetter in der Schweizzzzzz?”), but alas, the time lag, especially during winter term.

A couple of screen casts for instructor training are here and here.

How to access trprecord.exe

In the interpreting suite, students can click [image], click [image], paste “\\lgu.ac.uk\lgu$\multimedia student\mmedia\mmedia1\language_services\software\trprecord.exe”, click [image]. More help.